Here in the USA, you have to be afraid for your job these days. Layoffs are rampant everywhere due to outsourcing, and now we have AI on the horizon promising to make things more efficient, but we really know what it is actually going to be used for. They want to automate out everything. People packaging up goods for shipping, white collar jobs like analytics, business intelligence, customer service, chat support. Any sort of job that takes a low or moderate amount of effort or intellectual ability is threatened by AI. But once AI takes all these jobs away and shrinks the amount of labor required, what are all these people going to do for work? It’s not like you can train someone who’s a business intelligence engineer easily to go do something else like HVAC, or be a nurse. So you have the entire tech industry basically folding in on itself trying to win the rat race and get the few remaining jobs left over…

But it should be pretty obvious that you can’t run an entire society with no jobs. Because then people can’t buy groceries, groceries don’t sell, so grocery stores start hurting, and then they can’t afford to employ cashiers and stockers, and the entire thing starts crumbling. This is the future of AI, basically. The more we automate, the less people can do, so they have no jobs and no income and aren’t able to survive…

Like, how long until we realize how detrimental AI is to society? 10 years? 15?

Technologies like the loom, the steam shovel, the aeroplane, rocketry, computers, nuclear energy, the internet, and now AI are each tools that have really changed our world. They put many different people out of work, but they also reduced a lot of back-breaking, time-consuming work, which has allowed our world to move a lot faster. Just as an excavator can move a lot more dirt in a day than 5 men with shovels, AI can help with getting the initial ideas of the creative process, parse initial queries from customers, act as a first-pass filter over a huge repository of legal documents, be a patient teacher for beginner programming or other subjects, and so on. Each tool may have been overpromised to do everything, but that doesn’t mean it had no purpose.

With that said, any of these tools and technologies can be used for bad as much as they can be used for good. And combatting that doesn’t just mean waiting around hoping that the people entrenched in power, who use tech for their own personal gain, will suddenly give up those gains and commit them to the good of society. It means organizing to protect your neighbour. It means sharing the benefit of these tools with others, using them for good, and improving them for others.

My point is that it’s not AI that will cause society to crash, it’s greed, corporate greed in particular, which is being assisted by the unrealistic hype over AI.

Replace AI in your argument with industrial machinery, and you’ll get your answer. People have always had similar concerns about automation. There are some problems, but it isn’t with the technology itself.

The first problem is the concentration of wealth. Societal automation efforts need to start being viewed as something belonging to everyone, and the profits generated need to go back into supporting society. This’ll need to be solved to move forward peacefully.

The second problem is failure to deal with externalities. The true cost of automation needs to be accounted for from cradle to grave including all externalities. This means the pollution caused by LLM energy use needs to be a part of the cost of running the LLM, for example.

You may be on the younger side, or just not remember, but this happens almost every 20 years like clockwork.

In the 80’s it was the PC and computers at large.

In the 00’s it was robotic automation that was going to be the end of manual labor.

Now it’s this.

The sooner people realize that all of these things are just about the small number of wealthy people who control resources making more money at the expense of the majority of all other humans, the sooner something might get done. It’s been tried before in various movements with little to show for it, but maybe I’m just cynical.

There will need to be a major shift in how economic flow works in order to support an existing or expanding population regardless.

What do you think happened to buildings full of engineers designing plans and making stress load calculations? What do you think happened to switchboard operators?

Society can exist without jobs. Not everything has to be capital; in fact, reaching a post-scarcity world is needed for communism.

AI hype is also overblown as fuck. I remember watching the CGP Grey video Humans Need Not Apply, like what, 8 years ago? We haven’t really achieved some epic breakthrough, have we?

For me, from a software engineer’s perspective, “AI” is nothing but a productivity tool. It reduces the amount of mundane work I have to do, but then so does the IDE I use.

As humans we have been automating tasks for a long time. Just think about your washing machine: do you have any idea how hard it would be to have clean clothes without one? Do you think we would be better off if we needed cleaning services that clean our clothes for us using human labour just so people have jobs? Or is it better to use that effort elsewhere?

This is the part of the AI conversation that always bugs me. People have just concluded that the hype is real and we’ve reached the point that people fear in movies. They don’t understand that it’s mostly bullshit. Sure, the fancy autocomplete can toss up some boilerplate code and it’s mostly ok. Sure, it saves me time scrolling through StackOverflow search results.

But it’s simply not this all-knowing miracle replacement for everything. I think everyone has been conditioned by entertainment to fear the worst. When that bubble bursts, that will be the part which wreaks havoc on the economy.

“AI” returns mathematically plausible results from its tokenized training data. That is the ONLY thing it does. It doesn’t consider, it doesn’t fact check itself. “AI” in its current state is a party trick.

No matter what, it helps me incredibly.

It’s saving me a hell of a lot of man hours on incredibly tedious tasks that would require looking up individual items in a wiki or the like and then directly populating the answers into a spreadsheet… Our team doesn’t have the budget to hire someone to do it, so it basically just wouldn’t get done without it.

Useful party trick for me!

Your last sentence diminishes the value of the first sentence. These LLMs save me a ton of time and massively increase my productivity.

They’re starting to add options to cite references and consult documentation, and some of the engines actually check their source code to make sure it’s viable.

Now that they’ve hit stumbling blocks on organically improving, all those things you’re talking about can be done with conventional techniques.

Just to be clear, I’m not saying it isn’t useful, I’m just tired of hearing people say that the code “thinks”.

People had the same fears about cars, the internet, computers, telephones, the printing press, and even just books and reading/writing.

It’s capitalism. Capitalism will replace you with a machine. AI is just a tool.

For now, I work in AI.

IMO, using AI to remove jobs is the business equivalent of the Darwin Award. No sane executive will look at AI and see job replacement. A dumb executive will look at AI and see more productivity gains. A smart executive will see AI as a way to improve tooling for workers that explicitly want to use AI.

Sadly, as with most tech improvements, we’ll see lots of companies run by stupid people try to do stupid things with it. The best we can hope for is that there are opportunities for people to bail and find better job opportunities when their employer says “let’s fire HR and replace with GPT”, only to get absolutely brutalized by legal fees when their AI HR decides to fire someone for a protected reason, or refuses to fire a thief because they have a disability, or something that requires human intervention that doesn’t exist, or one of the hundreds of ways that it could go hilariously wrong.

It happens all the time. I remember watching solid profitable tech companies pivoting to delivering large apps on the new iPhone app store because “it’s the future”, only to realise that spending two years to develop an office suite for the iPhone 4 was a fucking stupid idea in hindsight. I remember people firing web developers because WYSIWYG editors would mean that you could design and build a website in the same way you create a Word doc. Stupid execs will always do stupid shit, and the world will move on.

Yup.

Some guys I know who worked at a developer contracting house (that I briefly worked for as well) all lost their jobs over the course of a year or so, as the company started rapidly downsizing because “Copilot means we don’t need as many developers anymore, we can fill orders with a skeleton crew.”

I’m excited to see that company fail for their bullshit.

I can answer that. We won’t.

We’ll keep iterating and redesigning until we have actual working general intelligence AI. Once we’ve created a general intelligence it will be a matter of months or years before it’s a super intelligence that far outshines human capabilities. Then you have a whole new set of dilemmas. We’ll struggle with those ethical and social dilemmas for some amount of time until the situation flips and the real ethical dilemmas will be shouldered by the AIs: How long do we keep these humans around? Do we let them continue to go to war with each other? Do they own this planet? Etc.

Assuming we can get AGI. So far there’s been little proof we’re any closer to getting an AI that can actually apply logic to problems that aren’t popular enough to be spelled out a dozen times in the dataset it’s trained on. Ya know, the whole perfect scores on well-known and respected college tests, but failing to solve slightly altered riddles for children? It being literally incapable of learning new concepts is a pretty major pitfall if you ask me.

I’m really sick and tired of this “we just gotta make a machine that can learn and then we can teach it anything” line. It’s nothing new, people have been saying this shit since fucking 1950 when Alan Turing wrote it in a paper. A machine looking at an unholy amount of text and evaluating, based on a new prompt, what the most likely word to follow is, IS NOT LEARNING!!! I was sick of this dilemma before LLMs were a thing, but now it’s just mind numbing.

AI developers are like the modern version of alchemists. If they can just turn this lead into gold, this one simple task, they’ll be rich and powerful for the rest of their lives!

Transmutation isn’t really possible, not the way they were trying to do it. Perhaps AI isn’t possible the way we’re trying to do it now, but I doubt that will stop many people from trying. And I do expect that it will be possible somehow, we’ll likely get there someday, just not soon.


Energy demands are only going to increase as we replace gas with electric alternatives. The problem you’re pointing to is an issue with the current infrastructure.


No, in this case I’m referring to the electric grid and what powers it.


You’re missing or ignoring my point. If the energy is provided by carbon neutral sources, then the amount used is irrelevant. That should be our goal. And I don’t know where you’re getting the notion that water resources are “boiled dry” or that GPU heat has any meaningful impact on the climate, but those aren’t actual issues.

And for the record, I’m not defending LLMs or generative images here. That bubble would be better off bursting, but the energy use isn’t why. Hell, it may be the only good aspect of the whole thing. With MS booting up old nuclear reactors, maybe it will revitalize interest so we can make some use of that technology.


Depends on your definition of “we”…

Automating jobs away is a good thing, many others here have explained why. When I read your title, I actually expected you would be writing about how AI is “detrimental to society” because it makes mistakes that humans don’t make and is therefore useless for anything serious; this, I would have had a harder time arguing against.

But AI is only going to get better in the long run. How is that a solid argument?

Automating jobs away is a good thing

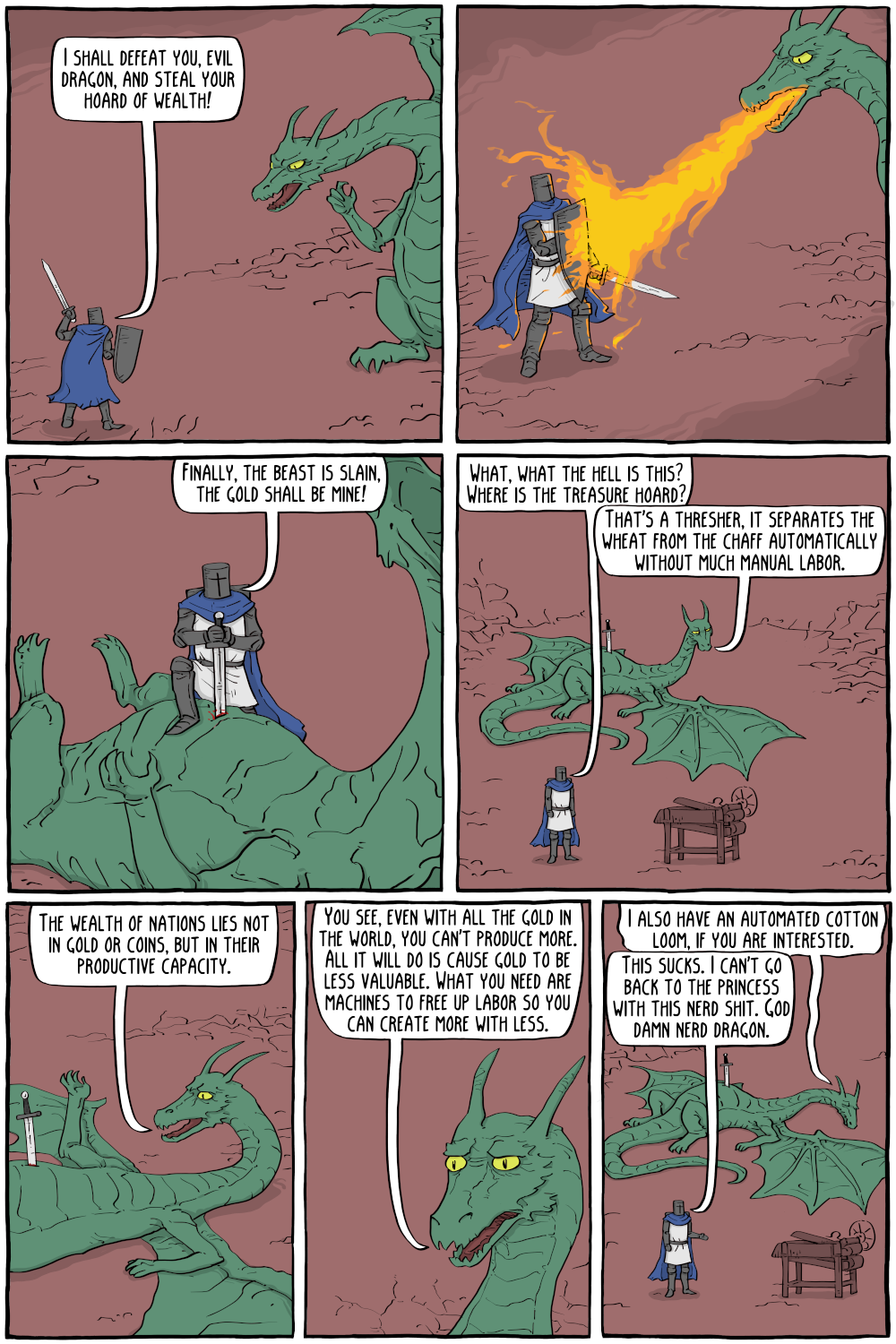

I remember seeing someone post this comic a while back, thought it was a pithy explanation.

https://static.existentialcomics.com/comics/TheWealthofDragons.png

now we have AI on the horizon promising to make things more efficient

sounds good

but we really know what it is actually going to be used for

Contradicts the first statement and the next statement

They want to automate out everything. People packaging up goods for shipping, white collar jobs like analytics, business intelligence, customer service, chat support. Any sort of job that takes a low or moderate amount of effort or intellectual ability is threatened by AI.

OK you do know what they want to use it for.

But once AI takes all these jobs away and shrinks the amount of labor required, what are all these people going to do for work? It’s not like you can train someone who’s a business intelligence engineer easily to go do something else like HVAC, or be a nurse.

Highly untrainable people have always existed and are always the first to get replaced.

But it should be pretty obvious that you can’t run an entire society with no jobs.

Well not one based on capitalism.

The more we automate, the less people can do, so they have no jobs and no income and aren’t able to survive…

Well, the ones that can’t do research and can’t look up history, maybe. AI is the new robots, which were the new assembly line, which was the new…

You are just using the age old technology fear narrative.

When Robots Take All of Our Jobs, Remember the Luddites (2017)

Is AI really only detrimental to society? We’re in the initial stages where they promise the world in order to get investors’ attention. But once the investors realize what it’s actually capable of, they’ll have to focus on what it’s actually capable of.

I think sometime next year we’ll have a crash, and all the companies pushing AI will be forced to either focus on quality, or find the next thing to push.

What is AI, according to you?

It’s a marketing term, aimed to create a void. So I wonder what products you think fill this void.

Ugh, I hate that you’re right about this. It used to mean a topic of study in computer science. Now it means…I don’t even know what it’s supposed to mean.