Serious answer: Posits seem cool, like they do most of what floats do, but better (in a given amount of space). I think supporting them in hardware would be awesome, but of course there’s a chicken and egg problem there with supporting them in programming languages.

Posits aside, that page had one of the best, clearest explanations of how floating point works that I’ve ever read. The authors of my college textbooks could have learned a thing or two about clarity from this writer.

I had the great honour of seeing John Gustafson give a presentation about unums shortly after he first proposed posits (type III unums). The benefits over floating point arithmetic seemed incredible, and posits seemed much simpler, too.

I also got to chat with him about “Gustafson’s Law”, which kinda flips Amdahl’s Law on its head. Parallel computing has long been a bit of an interest for me. I was also in my last year of computer science studies then, and we were covering similar subjects at the time, so I found the timing especially amusing.

No real use you say? How would they engineer boats without floats?

Just invert a sink.

Based and precision pilled.

I know this is in jest, but if 0.1+0.2!=0.3 hasn’t caught you out at least once, then you haven’t even done any programming.
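For anyone who hasn’t been caught out yet, here’s a minimal Rust sketch of the surprise and of the usual workaround, comparing within a tolerance. The helper name `approx_eq` and the tolerance are picked for illustration only; they are not a standard API, and real code often wants a relative tolerance as well.

```rust
/// Returns true if `a` and `b` differ by at most `tol` (absolute tolerance).
/// Hypothetical helper for illustration only.
fn approx_eq(a: f64, b: f64, tol: f64) -> bool {
    (a - b).abs() <= tol
}

/// The classic surprise: neither 0.1 nor 0.2 is exactly representable
/// in binary, so the rounded sum is not the same double as 0.3.
fn sum_is_exactly_three_tenths() -> bool {
    0.1_f64 + 0.2_f64 == 0.3_f64
}
```

Here `sum_is_exactly_three_tenths()` comes out false, while `approx_eq(0.1 + 0.2, 0.3, 1e-12)` comes out true.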

Me making my first calculator in C.

what if i add more =

IMO they should just remove the equality operator on floats.

That should really be written as the gamma function, because factorial is only defined for members of Z. /s

Floats are only great if you deal with numbers that have no need for precision and accuracy. Want to calculate the F cost of an A* node? Floats are good enough.

But every time I need to get any kind of accuracy, I go straight for actual decimal numbers. Unless you are in extreme scenarios, you can afford the extra 64 to 256 bits in your memory.

As a programmer who grew up without a FPU (Archimedes/Acorn), I have never liked float. But I thought this war had been lost a long time ago. Floats are everywhere. I’ve not done graphics for a bit, but I never saw a graphics card that took any form of fixed point. All geometry you load in is in floats. The shaders all work in floats.

Briefly ARM MCU work was non-float, but loads of those have float support now.

I mean you can tell good low level programmers because of how they feel about floats. But the battle does seem lost. There are lots of bits of technology that have taken turns I don’t like. Sometimes the market/bazaar has spoken and it’s wrong, but you still have to grudgingly go with it or everything is too difficult.

But if you throw an FPU in water, does it not sink?

It’s all lies.

I’d have to double check, but I think old handheld consoles like the Gameboy or the DS use fixed-point.

I’m pretty sure they do, but the key word there is “old”.

Floats make a lot of math way simpler, especially for audio, but then you run into the occasional NaN error.

On the PS3 Cell processor vector units, any NaN meant zero. Makes life easier if there are errors in the data.

Float is bloat!

While we’re at it, what the hell is -0 and how does it differ from 0?

It’s the negative version

For integers it really doesn’t exist. An algorithm for multiplying an integer by -1 is: invert all bits, then add 1. You can do that for 0 of course, it won’t hurt.
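A small Rust sketch of that invert-and-add-one rule, next to the float case for contrast. The function names are made up for this example. The point is that two’s complement negation maps 0 back to 0, whereas an IEEE 754 float keeps its sign in a separate bit, so -0.0 gets its own bit pattern even though it compares equal to 0.0.

```rust
/// Negate by inverting all bits and adding 1 (two's complement).
/// `wrapping_add` avoids an overflow panic in debug builds.
fn negate(x: i32) -> i32 {
    (!x).wrapping_add(1)
}

/// IEEE 754 floats store the sign in its own bit, so 0.0 and -0.0
/// have different bit patterns; this returns false.
fn float_zeros_share_bits() -> bool {
    (0.0_f64).to_bits() == (-0.0_f64).to_bits()
}
```

So `negate(0)` is just `0`, while `0.0 == -0.0` is true for floats even though the bits differ, and dividing `1.0` by each zero gives infinities of opposite sign.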

I have been thinking that maybe modern programming languages should move away from supporting IEEE 754 all within one data type.

Like, we’ve figured out that having a `null` value for everything always is a terrible idea. Instead, we’ve started encoding potential absence into our type system with `Option` or `Result` types, which also encourages dealing with such absence at the edges of our program, where it should be done.

Well, `NaN` is `null` all over again. Instead, we could make the division operator an associated function which returns a `Result<f64>` and disallow `f64` from ever being `NaN`.

My main concern is interop with the outside world. So, I guess, there would still need to be an IEEE 754 compliant data type. But we could call it `ieee_754_f64` to really get on the nerves of anyone wanting to use it when it’s not strictly necessary.

Well, and my secondary concern, which is that AI models would still want to just calculate with tons of floats, without error-handling at every intermediate step, even if it sometimes means that the end result is a shitty vector of `NaN`s, that would be supported with that, too.

I agree with moving away from floats but I have a far simpler proposal… just use a struct of two integers - a value and an offset. If you want to make it an IEEE standard where the offset is a four bit signed value and the value is just a 28 or 60 bit regular old integer then sure - but I can count the number of times I used floats on one hand and I can count the number of times I wouldn’t have been better off just using two integers on -0 hands.

Floats specifically solve the issue of how to store an absurdly large range of values in an extremely modest amount of space - that’s not a problem we need to generalize a solution for. In most cases having values up to the million magnitude with three decimals of precision is good enough. Generally speaking when you do float arithmetic your numbers will be within an order of magnitude or two… most people aren’t adding the length of the universe in seconds to the width of an atom in meters… and if they are, floats don’t work anyways.
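To make the two-integer idea concrete, here is a rough Rust sketch. Everything in it is an assumption for illustration: the name `Scaled`, the field widths (`i64` and `i8` rather than the 60 and 4 bits mentioned above), and above all the choice of a base-10 offset, which the proposal doesn’t actually pin down.

```rust
/// A number stored as `value * 10^offset`. Base 10 is an assumption made
/// here so that decimal fractions like 0.1 are exact.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct Scaled {
    value: i64,
    offset: i8,
}

impl Scaled {
    /// Add two numbers by rescaling both to the smaller offset.
    /// Returns None if rescaling or the addition overflows i64.
    fn checked_add(self, other: Scaled) -> Option<Scaled> {
        let offset = self.offset.min(other.offset);
        let a = rescale(self, offset)?;
        let b = rescale(other, offset)?;
        Some(Scaled { value: a.checked_add(b)?, offset })
    }
}

/// Express `x` at the smaller-or-equal offset `target` by multiplying
/// its value by the matching power of ten.
fn rescale(x: Scaled, target: i8) -> Option<i64> {
    let diff = (x.offset as i32 - target as i32) as u32;
    let factor = 10_i64.checked_pow(diff)?;
    x.value.checked_mul(factor)
}
```

With this, 0.1 + 0.2 (`value: 1` and `value: 2`, both at `offset: -1`) gives exactly `value: 3, offset: -1`. Adding two numbers whose offsets are very far apart returns `None`, which is the range problem floats were built to paper over. Normalization, multiplication and division are left out of the sketch.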

I think the concept of having a fractionally defined value with a magnitude offset was just deeply flawed from the get-go - we need some way to deal with decimal values on computers but expressing those values as fractions is needlessly imprecise.

While I get your proposal, I’d think this would make dealing with float hell. Do you really want to `.unwrap()` every time you deal with it? Surely not.

One thing that would be great, is that the `/` operator could work between `Result` and `f64`, as well as between `Result` and `Result`. Would be like doing a `.map(|left| left / right)` operation.

Well, not every time. Only if I do a division or get an `ieee_754_f64` from the outside world. That doesn’t happen terribly often in the applications I’ve worked on.

And if it does go wrong, I do want it to explode right then and there. Worst case would be, if it writes random `NaN`s into some database and no one knows where they came from.

As for your suggestion with the slash accepting `Result`s, yeah, that could resolve some pain, but I’ve rarely seen multiple divisions being necessary back-to-back and I don’t want people passing around a `Result<f64>` in the codebase. Then you can’t see where it went wrong anymore either.

So, personally, I wouldn’t put that division operator into the stdlib, but having it available as a library, if someone needs it, would be cool, yeah.
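For illustration, a library version of the ideas in this exchange might look roughly like the Rust sketch below. All names (`NotANumber`, `checked_div`, `div_result`) are hypothetical, and the sketch uses `and_then` rather than a bare `.map(|left| left / right)` so that the second division gets checked too.

```rust
/// Error for a division whose result is not a number (hypothetical type).
#[derive(Debug, PartialEq)]
struct NotANumber;

/// Divide, then check the result, turning NaN into an explicit error.
/// Note that x / 0.0 for nonzero x is an infinity, not NaN, so it passes.
fn checked_div(left: f64, right: f64) -> Result<f64, NotANumber> {
    let q = left / right;
    if q.is_nan() { Err(NotANumber) } else { Ok(q) }
}

/// Divide an earlier result by a plain f64, roughly what a `/` operator
/// between `Result` and `f64` would do. An earlier error is passed through.
fn div_result(left: Result<f64, NotANumber>, right: f64) -> Result<f64, NotANumber> {
    left.and_then(|l| checked_div(l, right))
}
```

`checked_div(0.0, 0.0)` yields `Err(NotANumber)` right at the division, while `div_result(checked_div(1.0, 2.0), 2.0)` yields `Ok(0.25)`. The downside raised above is visible here too: once an `Err` is passed along through `div_result`, it no longer says which division produced it.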

NaN isn’t like null at all. It doesn’t mean there isn’t anything. It means the result of the operation is not a number that can be represented.

The only option is that operations that would result in NaN are errors. Which doesn’t seem like a great solution.

Well, that is what I meant. That `NaN` is effectively an error state. It’s only like `null` in that any float can be in this error state, because you can’t rule out this error state via the type system.

Why do you feel like it’s not a great solution to make `NaN` an explicit error?

There are plenty of cases where I would like to do some large calculation that can potentially give a NaN at many intermediate steps. I prefer to check for the NaN at the end of the calculation, rather than have a bunch of checks in every intermediate step.

How I handle the failed calculation is rarely dependent on which intermediate step gave a NaN.

This feels like people want to take away a tool that makes development in the engineering world a whole lot easier because “null bad”, or because they can’t see the use of multiplying 1e27 with 1e-30.

Well, I’m not saying that I want to take tools away. I’m explicitly saying that an `ieee_754_f64` type could exist. I just want it to be named annoyingly, so anyone who doesn’t know why they should use it, will avoid it.

If you chain a whole bunch of calculations where you don’t care for `NaN`, that’s also perfectly unproblematic. I just think, it would be helpful to:

- Nudge people towards doing a `NaN` check after such a chain of calculations, because it can be a real pain, if you don’t do it.
- Document in the type system that this check has already taken place. If you know that a float can’t be `NaN`, then you have guarantees that, for example, addition will never produce a `NaN`. It allows you to remove some of the defensive checks, you might have felt the need to perform on parameters.

Special cases are allowed to exist and shouldn’t be made noticeably more annoying. I just want it to not be the default, because it’s more dangerous and in the average applications, lots of floats are just passed through, so it would make sense to block `NaN`s right away.
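The two points above could be sketched in Rust roughly like this: do the whole chain on plain floats, check once at the end, and let a wrapper type record that the check happened. `NonNan` and `normalized_ratio` are names invented for this example, not an existing API.

```rust
/// An f64 that has been checked and is known not to be NaN
/// (hypothetical wrapper).
#[derive(Debug, Clone, Copy, PartialEq)]
struct NonNan(f64);

impl NonNan {
    /// The single place where the check happens.
    fn new(x: f64) -> Option<NonNan> {
        if x.is_nan() { None } else { Some(NonNan(x)) }
    }

    /// Get the plain float back out.
    fn get(self) -> f64 {
        self.0
    }
}

/// A chain of plain float operations with no intermediate checks.
/// A NaN from any step propagates to the end, where it is caught once.
fn normalized_ratio(a: f64, b: f64) -> Option<NonNan> {
    let ratio = a / b;          // 0.0 / 0.0 gives NaN
    let scaled = ratio * 100.0; // NaN propagates through arithmetic
    NonNan::new(scaled.sqrt())  // sqrt of a negative number also gives NaN
}
```

A function that takes a `NonNan` parameter can then skip its own defensive `is_nan()` check, because the type already says the check was done.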

It doesn’t have to “error” if the result case is offered and handled.

Float processing is at the hardware level. It needs a way to signal when an unrepresented value would be returned.

My thinking is that a call to the safe division method would check after the division, whether the result is a `NaN`. And if it is, then it returns an Error-value, which you can handle.

Obviously, you could do the same with a `NaN` by just throwing an if-else after any division statement, but I would like to enforce it in the type system that this check is done.

I feel like that’s adding overhead to every operation to catch the few operations that could result in a NaN.

But I guess you could provide alternative safe versions of float operations to account for this. Which may be what you meant thinking about it lol

I would want the safe version to be the default, but yeah, both should exist. 🙃

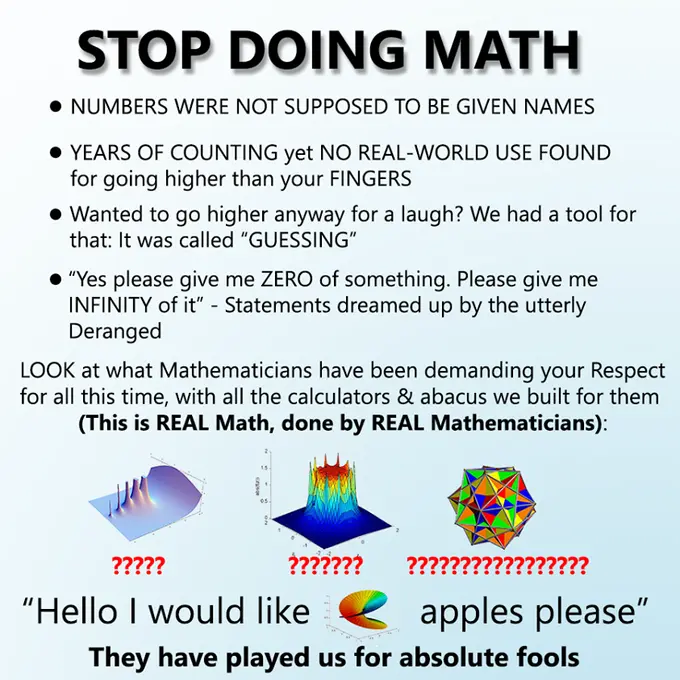

From time to time I see this pattern in memes, but what is the original meme / situation?

It’s my favourite format. I think the original was ‘stop doing math’

Thank you 😁

Off topic, but how does one get a profile pic on Lemmy? Also love you ken.

Thank you!

Go to “Settings” (cog wheel) and then “Avatar”:

That doesn’t really answer the question, which is about the origins of the meme template.

Yikes, placed this in the wrong spot. Thank you.

Precision piled.

I’m like, is that code on the right what I think it is? And it is! I’m so happy now

There are probably a lot of scientific applications (e.g. statistics, audio, 3D graphics) where exponential notation is the norm and there’s an understanding about precision and significant digits/bits. It’s a space where fixed-point would absolutely destroy performance, because you’d need as many bits as required to store your largest terms. Yes, NaN and negative zero are utter disasters in the corners of the IEEE spec, but so is trying to do math with 256-bit integers.

For a practical explanation about how stark a difference this is, the PlayStation (one) uses an integer z-buffer (“fixed point”). This is responsible for the vertex popping/warping that the platform is known for. Floating-point z-buffers became the norm almost immediately after the console’s launch, and we’ve used them ever since.

While it’s true the PS1 couldn’t do floating point math, it did NOT have a z-buffer at all.

The meme is right for once

Obviously floating point is of huge benefit for many audio dsp calculations, from my observations (non-programmer, just long time DAW user, from back in the day when fixed point with relatively low accumulators was often what we had to work with, versus now when 64bit floating point for processing happens more as the rule) - e.g. fixed point equalizers can potentially lead to dc offset in the results. I don’t think peeps would be getting as close to modeling non-linear behavior of analog processors with just fixed point math either.

Audio, like a lot of physical systems, involves logarithmic scales, which is where floating-point shines. Problem is, all the other physical systems, which are not logarithmic, only get to eat the scraps left over by IEEE 754. Floating point is a scam!