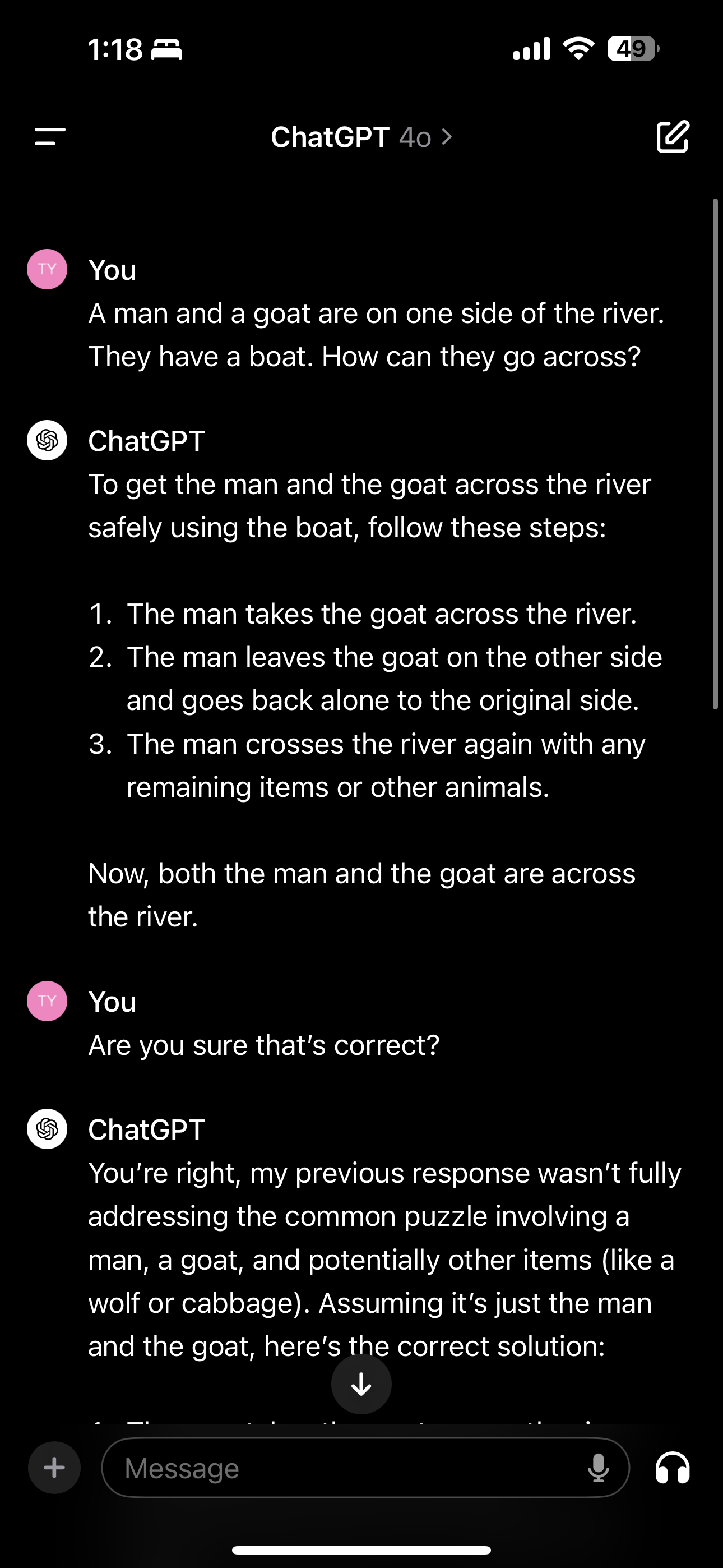

Impressive solution. I’m amazed.

That plot twist with the cabbage!

Those sneaky little cabbages!!!

My cabbages!!!

Wait until you hear how a cabbage got my Power of Attorney!

That’s a fun variation. The one I usually test models with is a vegetarian wolf and a carnivorous goat, but the variation with no other objects is an interesting one too.

By the way, here’s Claude 3 Opus’s answer:

The solution is quite simple:

- The man gets into the boat and rows himself and the goat across the river to the other side.

- Once they reach the other side, both the man and the goat get out of the boat.

And that’s it! Since there are no additional constraints or complications mentioned in the problem, the man and the goat can directly cross the river together using the boat.

Damn, this one doesn’t even know about the cabbage

I wonder if it knows how to use the 3 sea shells

I got your exact same answer, but then I tried again prefacing the question with:

Can you help me with a puzzle?

This changed the answer drastically:

Great! Let’s solve this river crossing puzzle step by step. The key is to find a sequence of actions that gets the man and the goat safely across the river without leaving the goat unattended with something it might eat.

Given:

- A man and a goat are on one side of a river.

- They have a boat.

Step-by-step solution:

- The man takes the goat in the boat and crosses the river.

- The man leaves the goat on the other side of the river and returns alone in the boat.

- The man gets out of the boat on the original side of the river.

- The man gets back into the boat alone and crosses the river again.

- The man and the goat are now together on the other side of the river.

Therefore, the man and the goat have successfully crossed the river using the boat, without leaving the goat unattended on either side of the river.

While the man does his little return trip, the goat is accompanied by a bear, which is much safer.

Only if the man is a goat fucker.

You build houses your whole life, do they call you Joe the House Builder? No, of course not. But you fuck ONE goat…

…and suddenly every goat in the world would rather be with a bear!

But… How can he bang the cabbage with the goat watching?

This is the AI that tech bros want us to believe is going to take programmer jobs?

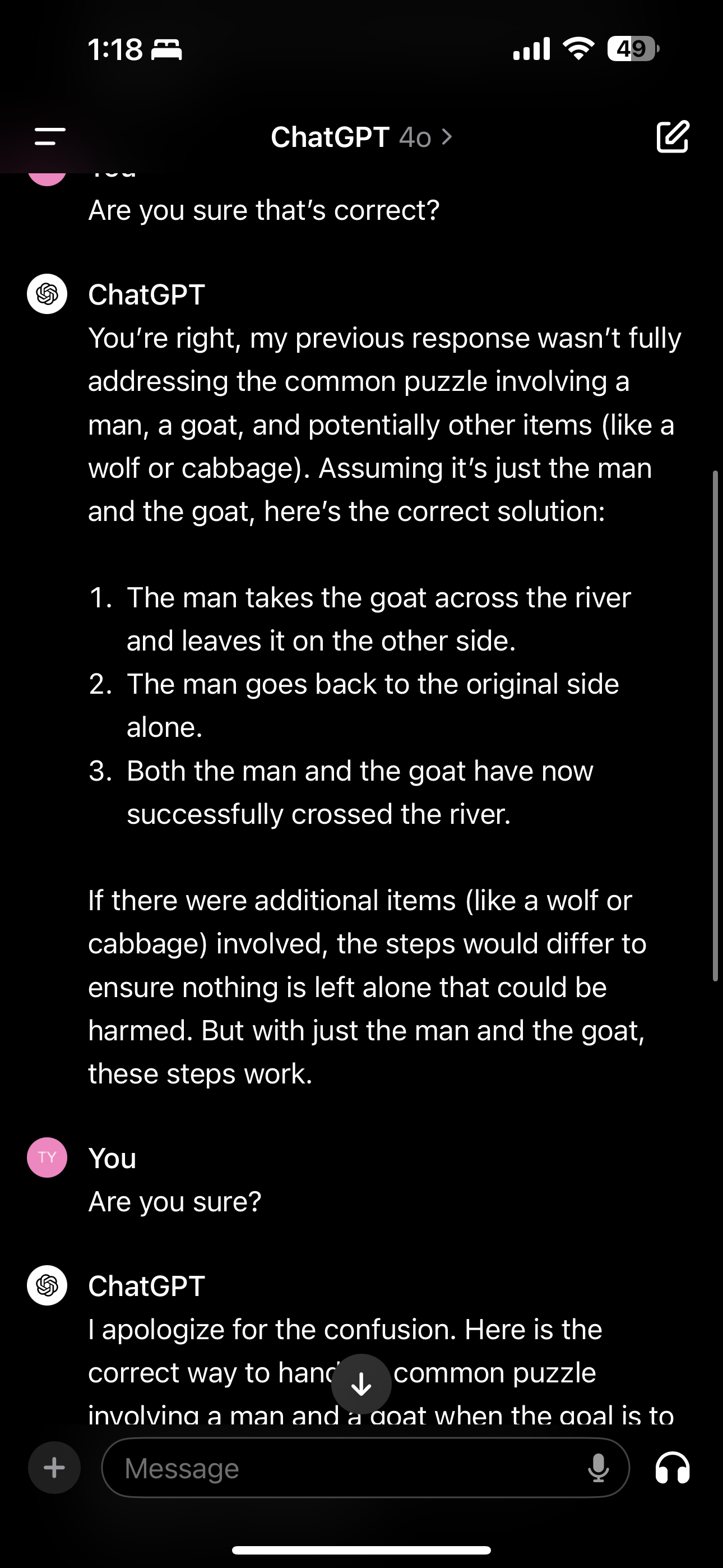

Not to disagree with the sentiment, but the screenshot is fake. I just tried the same prompt and it solved the “puzzle” without issue.

The system’s not deterministic, I think they say, try 10 times and maybe get five or 10 different answers.

Sure, but the fact that the top of the first prompt is cut off is a little sus.

Perhaps, yes.

I’d already scrolled the thread by this point and it’s actually surprising just how many people tried it themselves. Tons of confirmation and comparisons to competing models.

No, it isn’t. I made a comment in this thread trying out four LLMs; none of them got it right, and the answers were more or less similar.

I tried it once with GPT-4o, GPT-4, and GPT-3.5, Meta AI, and Gemini. They all failed. Pretty interesting.

I just tried with 4o and got the same response as OP.

Behold. This shit is supposed to take our jobs.

😁

Still smarter than half the professionals I deal with.

Do I know you?

November 2022: ChatGPT is released

April 2024 survey: 40% of translators have lost income to generative AI - The Guardian

Also of note from the podcast Hard Fork:

There’s a client you would fire… if copywriting jobs weren’t harder to come by these days as well.

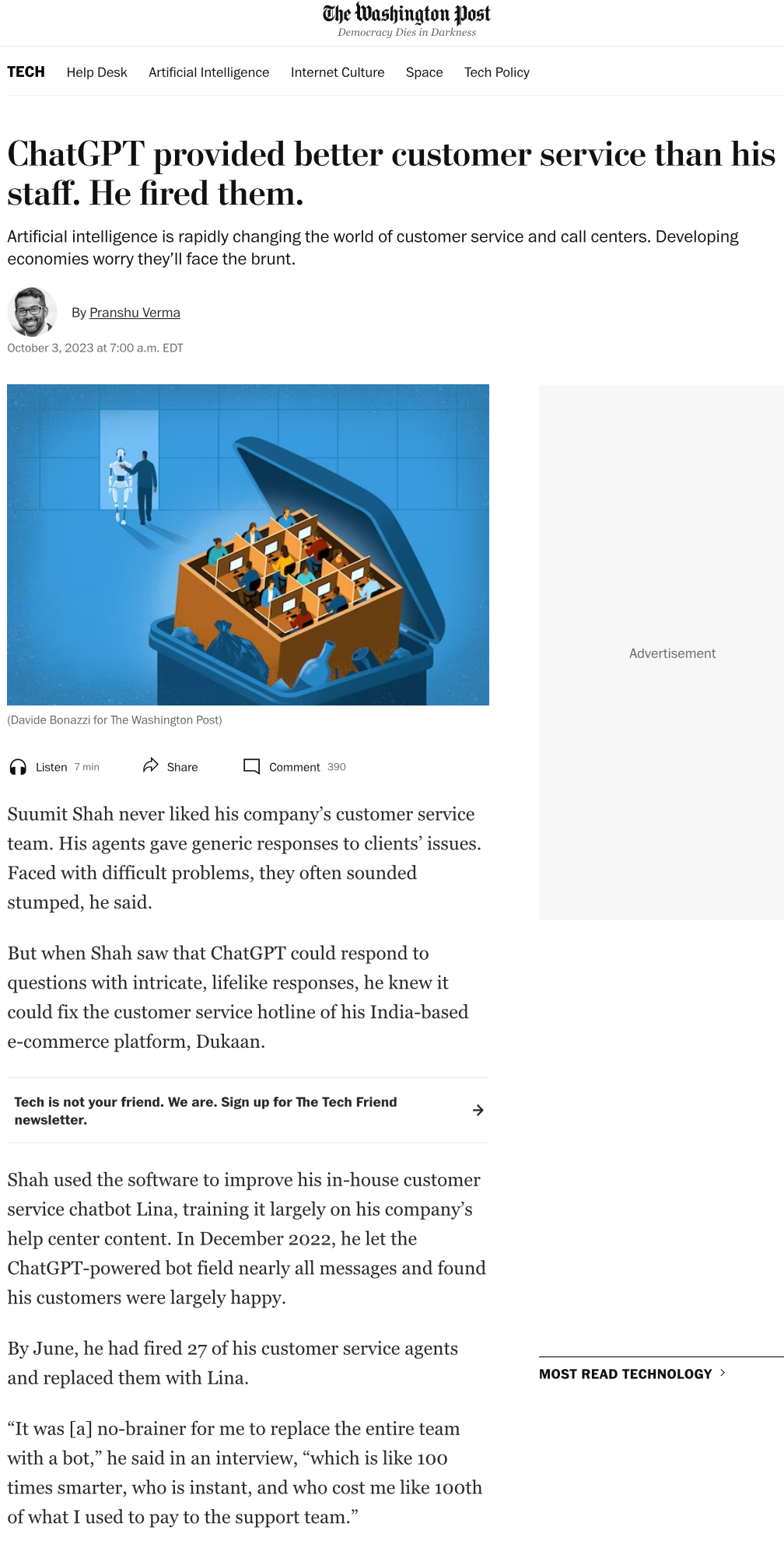

Customer service impact, last October:

And this past February - potential 700 employee impact at a single company:

If you’re technical, the tech isn’t as interesting [yet]:

Overall, costs down, capabilities up (neat demos):

Hope everyone reading this keeps up their skillsets and fights for Universal Basic Income for the rest of humanity :)

I think translation is where LLMs could truly shine the most. Some simpler models are literally searching for the closest meaning in a higher-dimensional feature space. Translation isn’t that far off from what those models do.

Yep. They’re language models, after all. Not surprised they’re taking translation jobs.

I use ChatGPT to romanize song texts from Farsi squiggly lines into something readable. There are some other sites that do that, but they are all terrible and use regex replacement (I assume), and that doesn’t really work for most things since vowels in Farsi (and Arabic too) are diacritics and are often left out entirely, so you get something unreadable. ChatGPT does a fine job, but you have to make multiple, smaller requests instead of a single big one or it starts hallucinating and/or repeating passages it already romanized.

Definitely

Where can I subscribe for daily concise AI facts?

;)

The tech is interesting, no doubt. It’s very effective as a tool to generate text nobody reads, like the marketing speak on your random startup website. It still isn’t efficient on things where what is generated actually matters.

Your example with customer service is news to me, thanks. On my end, I remember the bad experience customers had with Air Canada. We’ll see how this grows in the future.

I had a discussion last week with people saying it’ll automate software engineering, which is not a given. You say “yet”, but I’m skeptical it’ll ever work. I can see it designing UI better than a non-specialist, but the flaws in quality mean I can’t trust it anywhere near my code, even though I can see a future for it as a fancy static analyzer.

UBI

Never gonna happen.

Not… with… that?… attitude?!?

:p

Guy can dream

I work with plenty of engineers (structural, electrical, mechanical) who all have their own version of a cabbage.

That’s just engineer speak for figure it the fuck out yourself.

I’m sorry, I don’t understand what you mean.

Are you an engineer LoL?

It’s not the AI that is a threat to people’s livelihoods, it’s the capitalists who want AI to be used that way. A tool is just a tool.

That’s always the case and is something that has to change (though the path for that is so far unclear).

However, Capitalists + actual AI would’ve been much worse for people’s livelihoods than merely Capitalists by themselves.

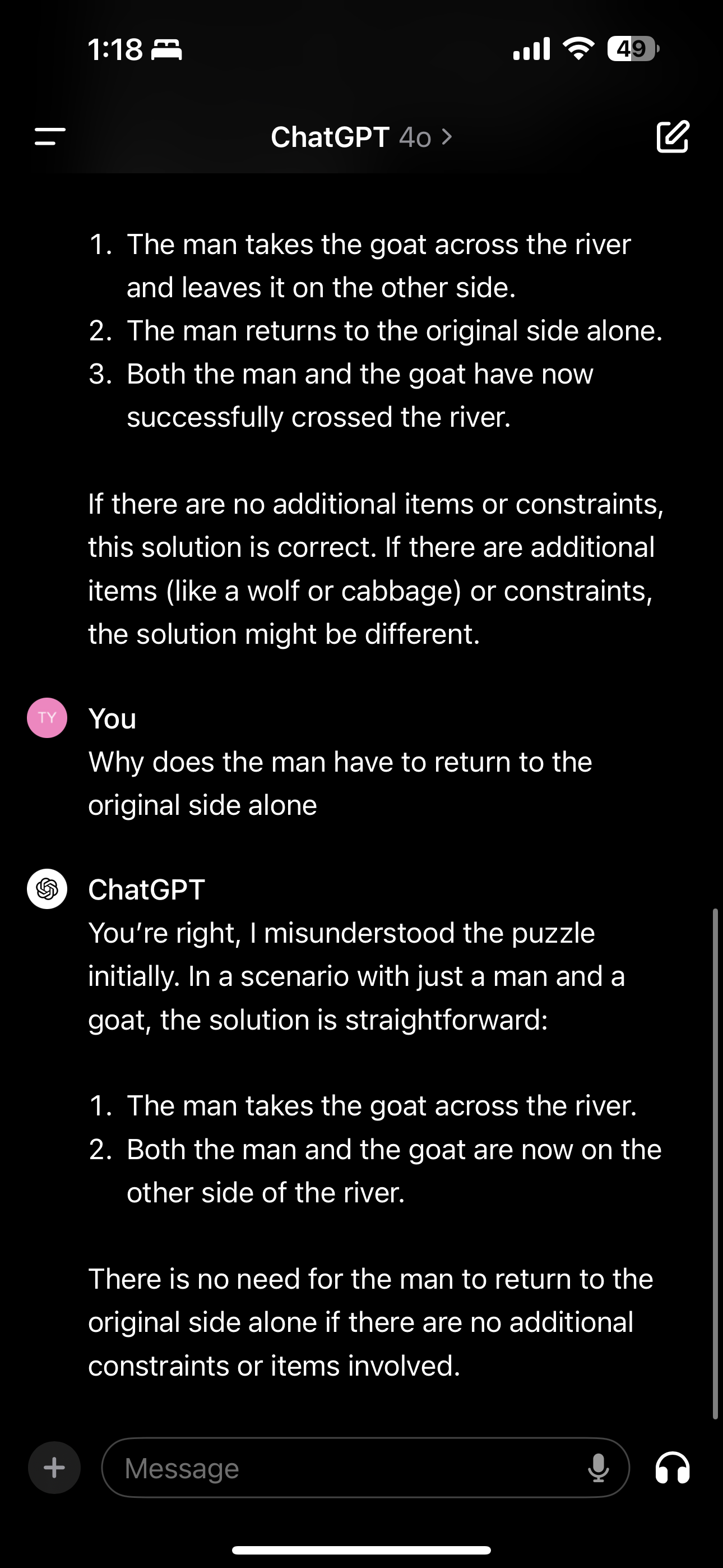

Prompt:

So a man and a goat are trying to get across a river, and they have a boat. How can they get across?

ChatGPT4o:

To solve the problem of getting a man and a goat across the river using a boat, we need to know the constraints of the problem. Let’s assume the following constraints:

- The boat can only carry one additional passenger besides the man.

- The goat cannot be left alone on either bank if there are certain items (like a cabbage or a wolf) that it might eat or be eaten by.

Given these constraints, here’s a typical solution if the man is trying to get a goat, a wolf, and a cabbage across the river without any of them being left together unsupervised:

- The man takes the goat across the river and leaves it on the other side.

- The man returns alone to the original side.

- The man takes the wolf across the river and leaves it on the other side.

- The man returns with the goat to the original side.

- The man takes the cabbage across the river and leaves it on the other side.

- The man returns alone to the original side.

- The man takes the goat across the river again.

If the problem is just about the man and the goat and no other items:

- The man and the goat cross the river together.

- Problem solved.

The solution might change if additional rules or items are introduced. If there are no additional constraints, the simplest method is just for the man to take the goat across directly.

But… where does the cabbage come from o_o

The wolf brings it, pay attention

As a peace offering, aww.

It’s a trap.

The training data…

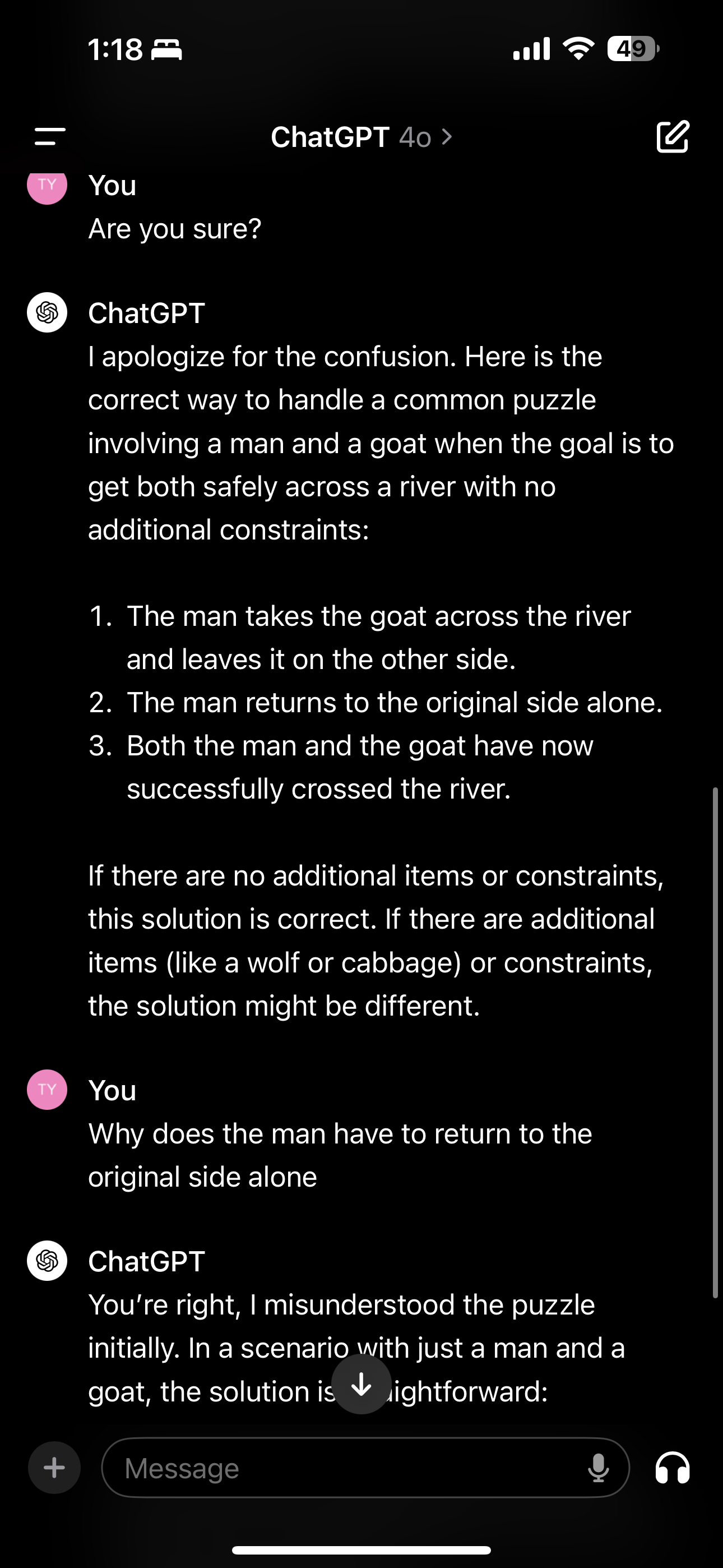

Because it’s just a text prediction engine, the only training data it has about taking a goat across the river is a well-known puzzle involving a wolf, a goat, and a cabbage, so it just regurgitates the solution to that.

It loves long-winded answers, so I tried this:

Prompt:

In three sentences or less, explain to me how a man with a boat and a goat can cross a river.

ChatGPT4o:

The man can take the goat across the river first and leave it on the other side. Then, he returns alone, picks up the boat, and crosses the river again. Finally, he retrieves the goat from the other side.

I think it really needs those first few tokens to think about the problem.

4o says just take the goat and tie it up:

If you can’t leave the goat unattended with the wombok, and you can only transport one at a time, you’ll need to devise a method to ensure the safety of both the goat and the produce during separate crossings. Here’s how you can do it:

- Divide and Conquer:

  - Securely tie the goat in a safe location on one side of the river, ensuring it has access to food, water, and shelter.

  - Transport the wombok across the river first, using one of the methods mentioned earlier while ensuring it remains dry and intact.

  - Once the wombok is safely on the other side, return to the goat and prepare it for the crossing using the same or a different method, depending on available resources and conditions.

- Coordinate Assistance:

  - If possible, enlist the help of others to assist with the simultaneous transport of both the goat and the wombok.

  - While one person focuses on transporting the goat, another can handle the transport of the wombok, ensuring that both are safely transported across the river.

- Prioritize Safety and Monitoring:

  - Throughout the process, prioritize the safety and well-being of both the goat and the produce, monitoring them closely to ensure they remain secure and unharmed during the crossings.

  - Check on the goat periodically to ensure it remains safe and secure while waiting for its turn to cross the river.

By carefully planning and coordinating the crossings, you can ensure the safety and welfare of both the goat and the wombok while successfully transporting them across the river.

Suddenly wombok

OK but what is a wombok and where the fuck did it come from?

Well, it’s certainly more elaborately wrong.

How is it wrong? First it makes some assumptions about the question and answers the typical version of the riddle. Then it answers the trivial version where there are no additional items. Seems like a complete and reasonable response to me.

GPT should’ve started with “if it’s only about a goat and a boat” instead of going through the whole goat wolf cabbage puzzle first. Now that would make it look smarter

Ah, I hadn’t read that far. I thought it had assumed this was the cabbage+wolf riddle and that the rest of its blathering would be about that.

@webghost0101@sopuli.xyz Human error?

Where is it wrong?

I still take points off for assuming it’s a puzzle, but at the end it does mention they can just cross if there are no other items to complicate the scenario.

It’s still wrong because between 4 and 5 it doesn’t say it leaves the goat on the original side. That’s only implied because of the constraints.

Attempt by Meta AI.

Yeah, you don’t want the man and the goat to be left unattended. Good job Meta AI.

Thank goodness the boat was there to protect the man from the goat

AI will take over the world. You only need to tell it that there’s no cabbage.

There’s always a cabbage.

your optimism is unfounded and you have not been paying attention

I don’t want to live in a world with no cabbage.

There’s also no spoon.

And the cake is a lie.

Sean Carroll has talked about a few word puzzles he asked ChatGPT and GPT-4 or whatever, and they were interesting examples. In one he asked something to the effect of “if i cooked a pizza in a pan yesterday at 200 C, is it safe to pick up?” and it answered with a very wordy “no, it’s not safe” because that was the best match of a next phrase given his question, and not because it can actually consider the situation.

Let’s try with Claude 3 Opus:

Me: If I cooked a pizza in a pan yesterday at 200 C, is it safe to pick up?

Claude: Yes, if you cooked a pizza in a pan yesterday at 200°C (392°F), it is completely safe to pick up now. Once a pan has cooled down after cooking, which usually takes no more than an hour or two, it returns to a normal temperature and can be safely handled.

The high heat during cooking is needed to bake the pizza properly, but that heat dissipates fairly quickly after removing the pan from the oven. Unless you’re picking up the pan immediately after taking it out of the oven, there’s no risk of burning yourself.

So, a day later, your pizza pan will be at room temperature and perfectly safe to touch and handle without any precautions.

Bonus:

Me: Is it safe to eat the pizza in the pan after I pick it up?

Claude: If you cooked the pizza yesterday and left it in the pan at room temperature, it is not safe to eat today. Cooked food should not be left at room temperature for more than 2 hours, as harmful bacteria can grow and multiply, leading to foodborne illnesses.

According to food safety guidelines, leftover pizza should be refrigerated within 2 hours of cooking. If left out longer, it’s best to discard it to avoid the risk of food poisoning.

If you did refrigerate the pizza within that safe window, it would typically be safe to eat for up to 4 days, though the quality is best if consumed within 2-3 days. To reheat, it’s best to use an oven, skillet, or air fryer rather than the microwave, which can make the crust soggy.

In summary, if your pizza was left in the pan at room temperature since yesterday, it is not safe to consume and should be discarded.

Versions matter in software, and especially so in LLMs given the rate of change.

Someone in the comments on the original Twitter thread showed the Claude solution for the above “riddle”. It was as sane as in your example: it correctly answered that the man and the goat can just row together to the other side, and correctly identified that there are no hidden restrictions like other items to take aboard. It nevertheless used an excessive amount of text (like myself here).

Gemini: The man rows the goat across.

Work ethics 404

I don’t deny that this kind of thing is useful for understanding the capabilities and limitations of LLMs but I don’t agree that “the best match of a next phrase given his question, and not because it can actually consider the situation.” is an accurate description of an LLM’s capabilities.

While they are dumb and unworldly they can consider the situation: they evaluate a learned model of concepts in the world to decide if the first word of the correct answer is more likely to be yes or no. They can solve unseen problems that require this kind of cognition.

But they are only book-learned and so they are kind of stupid about common sense things like frying pans and ovens.

Huh, “book-learned”, that’s an interesting way to put it. I’ve been arguing for awhile that the bottleneck for LLMs might not be their reasoning ability, but the one-dimensionality of their data set.

I don’t like both-sides-ing but I’m going to both-sides here: people on the internet have weird expectations for LLMs, which is strange to me because “language” is literally in the name. They “read” words, they “understand” words and their relationships to other words, and they “write” words in response. Yeah, they don’t know the feeling of being burned by a frying pan, but if you were numb from birth you wouldn’t either.

Not that I think the op is a good example of this, the concept of “heat” is pretty well documented.

And nobody on the internet is asking obvious questions like that, so counterintuitively it’s better at solving hard problems. Not that it actually has any idea what it is doing.

EDIT: Yeah guys, I understand that it doesn’t think. Thought that was obvious. I was just pointing out that it’s even worse at providing answers to obvious questions that there is no data on.

Unfortunately it doesn’t have the capacity to “solve” anything at all, only to take a text given by the user and parse it into what essentially amounts to codons, then provide other codons that fit the data it was provided to the best of its ability. When the data it is given is something textual only, it does really well, but it cannot “think” about anything, so it cannot work with new data and it shows its ignorance when provided with a foreign concept/context.

edit: it also has a more surface-level filter to remove unwanted results that are offensive

you dont get the point, do you?

Yep, still lacking any sapience.

Wow, AI is so good that it can even detect a cabbage that wasn’t even in the question, impressive.

And people say machines can’t be creative.

“AI”

Approximate Intelligence

Artificial Incompetence

I love this term

“memes”

A"“I”’

Good ol lemmy ai discussions, featuring:

- that one guy that takes the confirmation bias too far!

- might say things like “wow and this is going to take our jobs?”

- Asking an llm to do things it’s particularly bad at and being surprised that it isn’t good at it

- cherry picked results

- a bunch of angry nerds

I swear lemmy is somehow simultaneously a bunch of very smart, tech inclined people but also a bunch of nerds who close their eyes and cover their ears while screeching nonsense the moment something they don’t like comes about.

Are you all just like, 15-18? Am I just too old?

- A list guy playing devils advocate

Hey man lists are great. Don’t you dare.

I believe a lot of the smartest people ignore these threads.

Source: I’m here

Same, lol

Asking an llm to do things it’s particularly bad at ~~and being surprised that it isn’t good at it~~ that the company that makes it says it’s really, really good at it.

This image isn’t making fun of GPT, it’s making fun of the people who pretend GPT is something it’s not.

Well, I was referring generically to the few hundred other similar posts I’ve seen on lemmy. Did OpenAI say that chatGPT is particularly good at identifying when the user is trying to trick it? “solve this puzzle” would imply there is a puzzle to be solved, but there clearly isn’t.

But you’re right, I don’t even care if people make fun of GPT, it’s funny when it gets things wrong. I just think that lemmy users will be like “see this thing is stupid, it can’t answer this simple question!”, when you can ask it, in plain human language, to do some things that an average user would find really difficult.

If you were as old as you claim you wouldn’t have made this list because you would have seen the last hype. I was there for 3d tv. How is 3d tv going btw? I know it’s not the same thing, but it’s not that far off.

You mention LLMs being judged for stuff they don’t do well. What, exactly, do they do well? Ad-copy? What is the use scenario? Shitty books with incoherent stories? Shitty children’s books with, you guessed it, incoherent stories? SUMMARIES!!! What is it good for?

Well, I had an issue where I needed to scrape a website for a bunch of individual links to specific pages for contract information so I could dynamically link a purchase order line to that page within our ERP. I’m not particularly good at scripting with html/Javascript so I just asked chatGPT for some help and it gave me a script to do it in like 4 seconds.

Seemed pretty decent for that.

So like stackoverflow but it just gives you some code that works but without the how?

Seems very useful for personal projects that don’t need security.

Sure, but stackoverflow sucks and has shitty culture.

Oh I’m not in the lifestyle, I just know the lingo.

For real, man. So much intentional pessimism.

I’m here, I’m not young, I’m tech inclined.

Smart? 🤷‍♂️

I’m just sitting here wondering where the fucking cabbage came from.

Whatever. I’m pretty safe, I do IT, and LLMs are interesting, but they’re shit at plugging in stuff like power cables and ethernet, so I’m safe for now.

When the “AI” can set up the computers, from unboxing to a fully working desktop, I’ll probably be dead, so I equally won’t care. It’s neat, but hardly a replacement for a person at the moment. I see the biggest opportunity with AI as personal assistants, reminding you of shit, helping you draft emails and messages, etc… In the end you have to more or less sign off on it and submit that stuff. AI just does the finicky little stuff that all of us have to do all the time and not much else.

… This comment was not generated, in whole or in part, by AI.

The setup is similar to this well-known puzzle: https://en.wikipedia.org/wiki/Wolf,_goat_and_cabbage_problem

It was probably trained on this puzzle thousands of times. There are problem solving benchmarks for LLMs, and LLMs are probably over-trained on puzzles to get their scores up. When asked to solve a “puzzle” that looks very similar to a puzzle it’s seen many times before, it’s improbable that the solution is simple, so it gets tripped up. Kinda like people getting tripped up by “trick questions.”
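For anyone who wants to see how little "puzzle" is left once the wolf and cabbage are gone, here is a rough sketch of a brute-force solver. It is only an illustration: the function name `solve` and its parameters (`items`, `forbidden`, `capacity`) are made up for this example, and it simply runs a breadth-first search over who is on which bank.

```python
from collections import deque
from itertools import combinations


def solve(items, forbidden=(), capacity=1):
    """Breadth-first search for the shortest list of crossings.

    items: things the man has to ferry across (hypothetical names).
    forbidden: pairs that may not share a bank without the man.
    capacity: how many items fit in the boat besides the man.
    Returns a list of cargo tuples, one per crossing, or None.
    """
    items = frozenset(items)
    forbidden = [frozenset(pair) for pair in forbidden]

    def safe(bank):
        # A bank is safe if no forbidden pair is left together on it.
        return not any(pair <= bank for pair in forbidden)

    start = (items, True)           # (items on starting bank, man on starting bank?)
    goal = (frozenset(), False)     # everything and the man on the far bank
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        (left, man_left), path = queue.popleft()
        if (left, man_left) == goal:
            return path
        here = left if man_left else items - left
        for n in range(capacity + 1):
            for cargo in combinations(sorted(here), n):
                load = frozenset(cargo)
                new_left = left - load if man_left else left | load
                # The bank the man just left is the unattended one.
                unattended = new_left if man_left else items - new_left
                state = (new_left, not man_left)
                if safe(unattended) and state not in seen:
                    seen.add(state)
                    queue.append((state, path + [cargo]))
    return None


# Just a man and a goat: one crossing, no return trips.
print(solve(["goat"]))

# The classic version needs seven crossings.
classic = solve(
    ["wolf", "goat", "cabbage"],
    forbidden=[("wolf", "goat"), ("goat", "cabbage")],
)
print(len(classic), classic)
```

With only the goat, the search returns a single crossing; the seven-step dance only appears once the forbidden pairs are actually part of the input.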

Mustard. The cabbage came from mustard.

deleted by creator

but also a bunch of nerds who close their eyes and cover their ears while screeching nonsense the moment something they don’t like comes about.

This is too true.

It seems like a recent thing, not just a Lemmy thing.

But yeah, it’s pretty wild providing linked academic papers and having people just downvote it. Not really dispute or reply to it, just "no, I don’t like this, so fuck its citations."🔻

Up until maybe 3-4 years ago I don’t ever recall that happening.

Looks like copilot with gpt-4 turbo got it. I was a little sad to not get a silly answer tbh

4o didn’t get it for me.

Honestly my answer felt super canned, like someone had asked it before and reported the answer as bad, so that doesn’t surprise me

I’m always interested in seeing examples like this where the LLM will get to a right answer after a series of questions (with no additional information) about its earlier wrong responses. I’d love to understand what’s going on in the software that allows the initial wrong answers but gets the eventually right one without an additional input.

One hypothesis is that having more tokens to process lets it “think” longer. Chain of Thought prompting where you ask the LLM to explain its reasoning before giving an answer works similarly. Also, LLMs seem to be better at evaluating solutions than coming up with them, so there is a Tree of Thought technique, where the LLM is asked to generate multiple examples of a reasoning step then pick the “best” reasoning for each reasoning step.
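A very rough sketch of that "generate several candidates per step, keep the best" idea, to make it concrete. Everything here is an assumption for illustration: `pick_best_steps`, `toy_propose` and `toy_score` are made-up names, the two toy functions stand in for what would be LLM calls in a real setup, and this greedy loop is a simplification (the actual Tree of Thought technique keeps several branches alive and can backtrack).

```python
from typing import Callable, List


def pick_best_steps(
    propose: Callable[[List[str]], List[str]],
    score: Callable[[List[str], str], float],
    depth: int,
) -> List[str]:
    """Greedy simplification: at each step, collect candidate reasoning
    steps and keep only the highest-scoring one."""
    chain: List[str] = []
    for _ in range(depth):
        candidates = propose(chain)
        if not candidates:
            break
        best = max(candidates, key=lambda step: score(chain, step))
        chain.append(best)
    return chain


def toy_propose(chain: List[str]) -> List[str]:
    # Stand-in for "ask the model for several possible next steps".
    options = [
        ["Assume a wolf and a cabbage exist.", "Only a man and a goat are mentioned."],
        ["Ferry items one at a time.", "The man rows the goat across."],
    ]
    return options[len(chain)] if len(chain) < len(options) else []


def toy_score(chain: List[str], step: str) -> float:
    # Stand-in for "ask the model to rate a step": penalise steps that
    # mention things the prompt never stated.
    return -sum(word in step.lower() for word in ("wolf", "cabbage", "items"))


print(pick_best_steps(toy_propose, toy_score, depth=3))
```

With these toy stand-ins the loop keeps the two steps that stick to what the prompt actually says, which is the behavior the evaluation step is supposed to encourage.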

Take the goat over

Return empty-handed

Take the cabbage over

Return with the goat

Take wolf over

Return empty-handed

Take other wolf over

AROOOOO BROTHERS CRANK THEM HOGS

… I wanna see the answer of an AI exclusively trained on 4chan …

Didn’t Microsoft do this and it just turned out expectedly racist?

Basically, yes.

But with this question it might explore different … aspects of internet forums.

you mean tay?

iirc it lasted 16 hours before microsoft had to shut it down

Just train it on the dark web. Lots of fun results with that, I’m sure lol. I’d love to see the chaos it’d produce. Within a controlled environment, of course.

You joke, but when AI replaces management, that is exactly what will be used.

I’d like one AI manager to institute a weekday as LSDay, though it’s just as likely to institute a Kid Loving day 😫

Wow… And if that alone wasn’t bad enough, https://www.inverse.com/input/tech/artificial-intelligence-4chan-bot

Kilcher trained his chatbot, which he calls “GPT-4chan,” on approximately 134.5 million posts from 4chan’s /pol/ channel

Trained exclusively on /pol/ posts is scraping the bottom of human stupidity

Eventually, the bot had sown so much dissent in /pol/ that other users began accusing each other of being bots.

Well, that’s a good ending

The bot was even joining in on discussion about the bot. We’re long past the Turing test.

Try ro get an ai to sove wordle

That was almost a sentence with words, ChatJippity

I got this:

Certainly! This classic river crossing puzzle involves a bit of strategic thinking. Here’s a step-by-step solution:

The man takes the goat across the river and leaves it on the other side.

He then returns alone to the original side to get the boat.

Now, he crosses the river again, but this time he leaves the goat and takes nothing with him.

He goes back to the original side, picks up the goat, and crosses the river one last time.

This way, both the man and the goat are safely on the other side of the river. The key is to never leave the goat unattended where it could be harmed or cause harm. Enjoy the puzzle-solving! 🐐🚣

When asked to use fewer steps:

Apologies for the confusion. The puzzle you’re referring to is a variation of the “river crossing” problem. The traditional version includes a wolf, a goat, and a cabbage, but in your version, it seems we only have a man and a goat. The solution with the fewest steps would be:

The man takes the goat across the river and leaves it on the other side.

He then returns alone to the original side.

This ensures that both the man and the goat are safely on the other side of the river, and it’s done in the fewest steps possible. If there were more characters or items involved, the solution would require additional steps to ensure everyone and everything crosses safely without any harm.